Miles D. Williams, Visiting Assistant Professor of Data for Political Research

[Note: This is a version of a post Dr. Williams did for his Substack Foreign Figures]

AI (shorthand for all the large language models at large these days) is on everyone’s minds in higher education—well, really at every level of education, and in anything remotely connected to “knowledge” work, the corporate world, the public and private sectors, and basically everywhere else. AI is a big deal.

It’s no wonder. AI raises grand questions about ethics, what it means to be human, and whether machines can become sentient (though if someone knows for sure what “sentient” means and how to measure it, please let me know). Its day-to-day use in higher education raises more practical questions, however, such as whether and how students should use AI in their coursework. That’s a question that faculty and administrators are still grappling with, and from where I sit, I see little evidence that we in higher education will coalesce around a definitive answer soon. I certainly have my own strongly held opinion—basically I think most students should develop skills without or with only limited AI assistance before educators can responsibly grant clearance for more general kinds of AI use. But whether I support it or not, students are using AI, and often without reasonable guardrails.

So exactly how widespread is AI use among Denison students? What do students use it for? And do any of them have the same concerns I do about AI’s impact on education?

How many students use AI?

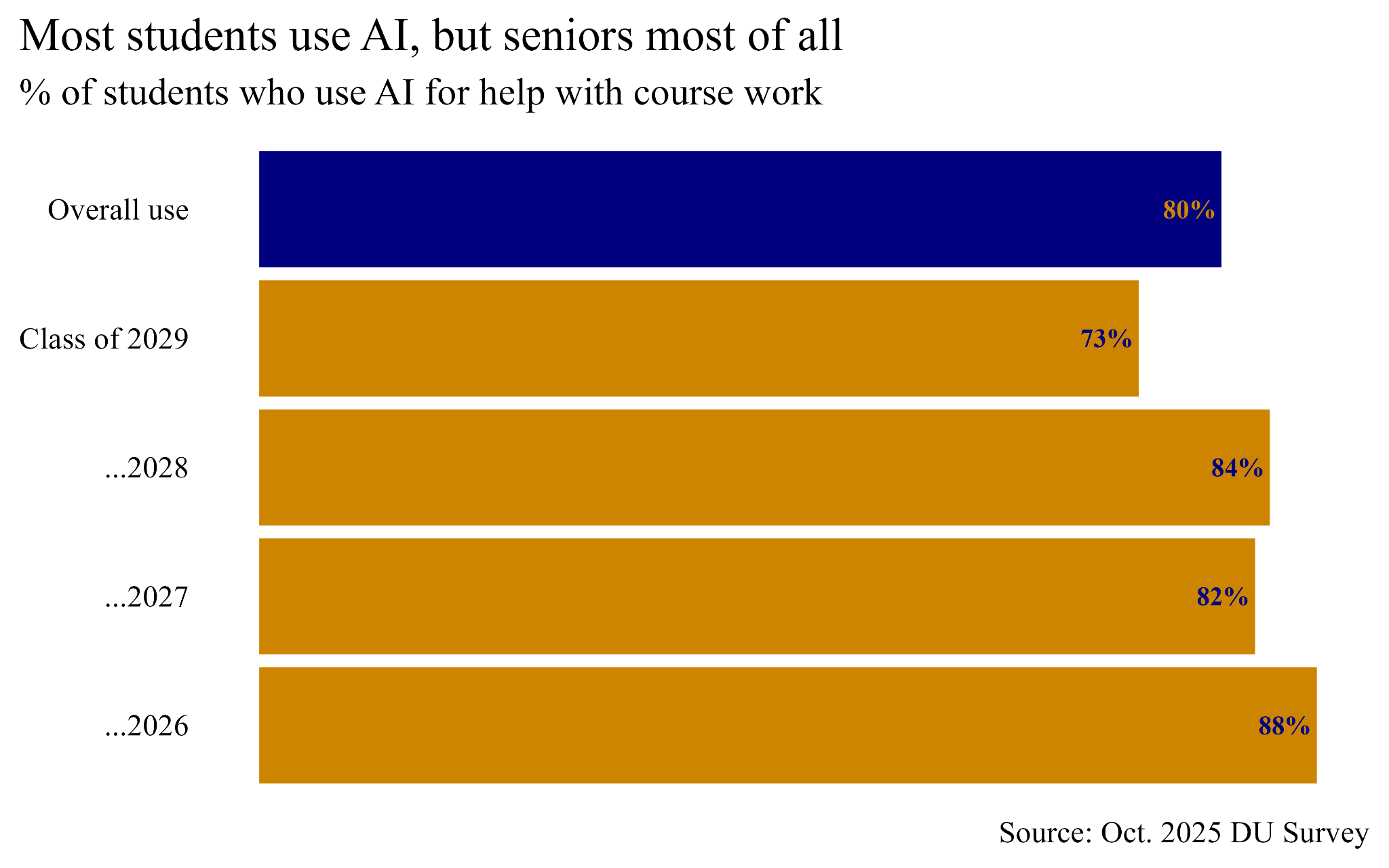

Of the nearly 420 students who took the October 2025 survey (out of the more than 2,300 enrolled at Denison), nearly 80% reported that they used AI for coursework in some capacity. That’s a strong majority. It’s also a hair more than the use rate of 78% reported back in the spring semester, and much higher than the 53% use rate reported in fall 2024.

Given the growth in usage in the last academic year, I’m actually surprised that the current rate isn’t higher than 80%. Either some of the remaining 20% of students lied, or a big upsurge is coming next semester after freshmen have better acclimated to this brave new AI world. My hunch is that the latter explanation is the better of the two, and the data backs me up, which you can see in the figure below. When I broke the data down by year of expected graduation, I found that freshmen reported the lowest AI use rate at 73%. Seniors had the highest reported rate, coming in at nearly 88%. I bet that come the spring semester, some AI holdouts among the freshmen will cave, which will bring up the overall average.

How do students use AI?

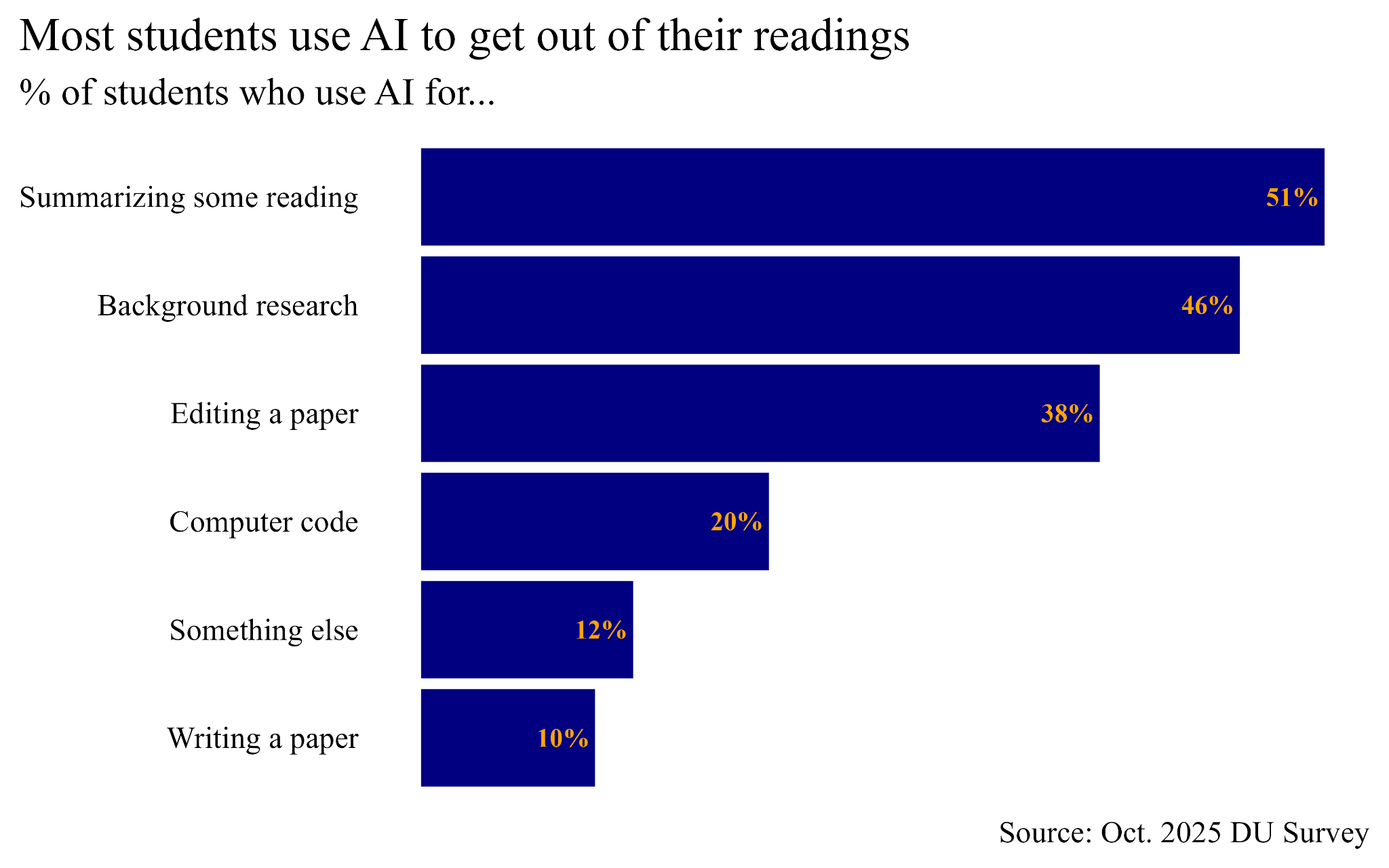

Consistent with results from the spring survey, the most common way students report using AI for coursework is summarizing readings. The survey asked students to indicate whether they use AI to help with 6 different tasks, and they could select none or all of them. A slim majority of 51% said they use AI to summarize their readings, 46% for background research, 38% for editing, 20% for coding, and only 10% for writing an entire paper. 12% said they used it for something else. I checked, and these numbers track closely with the spring survey, so what students claim to use AI for has remained pretty stable.

I actually find it perfectly understandable that most students use AI to cut down on their reading load. Students have tried all kinds of ways to avoid their assigned readings since time immemorial. AI provides a new way to pull an old maneuver.

What amazes me, though, as it also did when I saw the spring survey results, is that only 1 out of 10 students say they use AI to write papers. Do students really exercise this kind of self-control?

As heartening as this finding seems, I should caveat that not all students take classes that include writing papers. I don’t actually know from this data whether 10% of students writing papers use AI, or if 10% of students write papers and all of them offload the task to AI. The truth lies somewhere in the middle, but without data all I can really go on is vibes. As someone who must begrudgingly grade some AI-generated papers (or at least some partially written by AI), I feel confident saying the rate is much higher than 10%, but also much less than 100%.
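To make that ambiguity concrete, here is a minimal sketch of the arithmetic. The only number taken from the survey is the 10% figure; the shares of students who write papers at all are hypothetical values I picked for illustration, since the survey doesn't measure them.

```python
def implied_rate_among_writers(overall_share, share_writing_papers):
    """Share of paper-writing students who use AI to write papers.

    overall_share: fraction of ALL students who say they use AI to write
        papers (0.10 in the survey).
    share_writing_papers: fraction of all students who write papers at all
        (NOT measured by the survey; a hypothetical input).
    """
    if not 0 < share_writing_papers <= 1:
        raise ValueError("share_writing_papers must be in (0, 1]")
    if overall_share > share_writing_papers:
        raise ValueError("AI paper writers cannot outnumber paper writers")
    return overall_share / share_writing_papers


overall = 0.10  # from the survey
# Hypothetical shares of students whose classes involve writing papers.
for share in (1.0, 0.5, 0.25, 0.10):
    rate = implied_rate_among_writers(overall, share)
    print(f"If {share:.0%} of students write papers, "
          f"{rate:.0%} of them use AI to do it")
```

The same 10% headline number is consistent with anything from 10% to 100% of paper writers leaning on AI, depending on an input the survey never collected.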

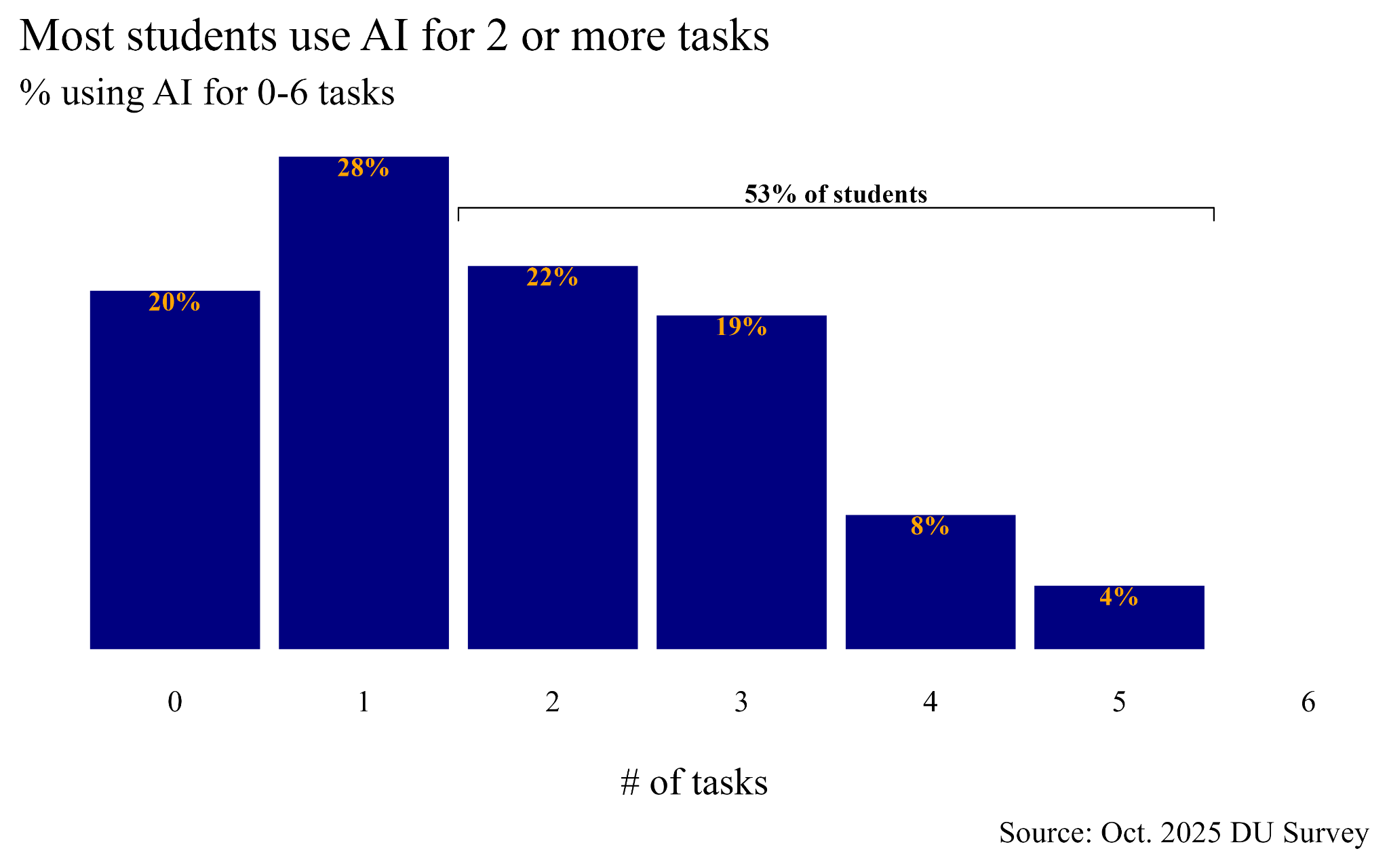

One other thing I found in the data is that students who use AI for one task tend to use it for many. When you count up the number of different tasks students say they use AI to help with, 53% say they use it for 2 or more. You can see this in the figure below. Use does taper for higher numbers of tasks, though. Very few use AI to help with 4 or 5 different tasks, and none use it for 6. But only 28% use AI for help with only one thing.

How do students feel about AI use?

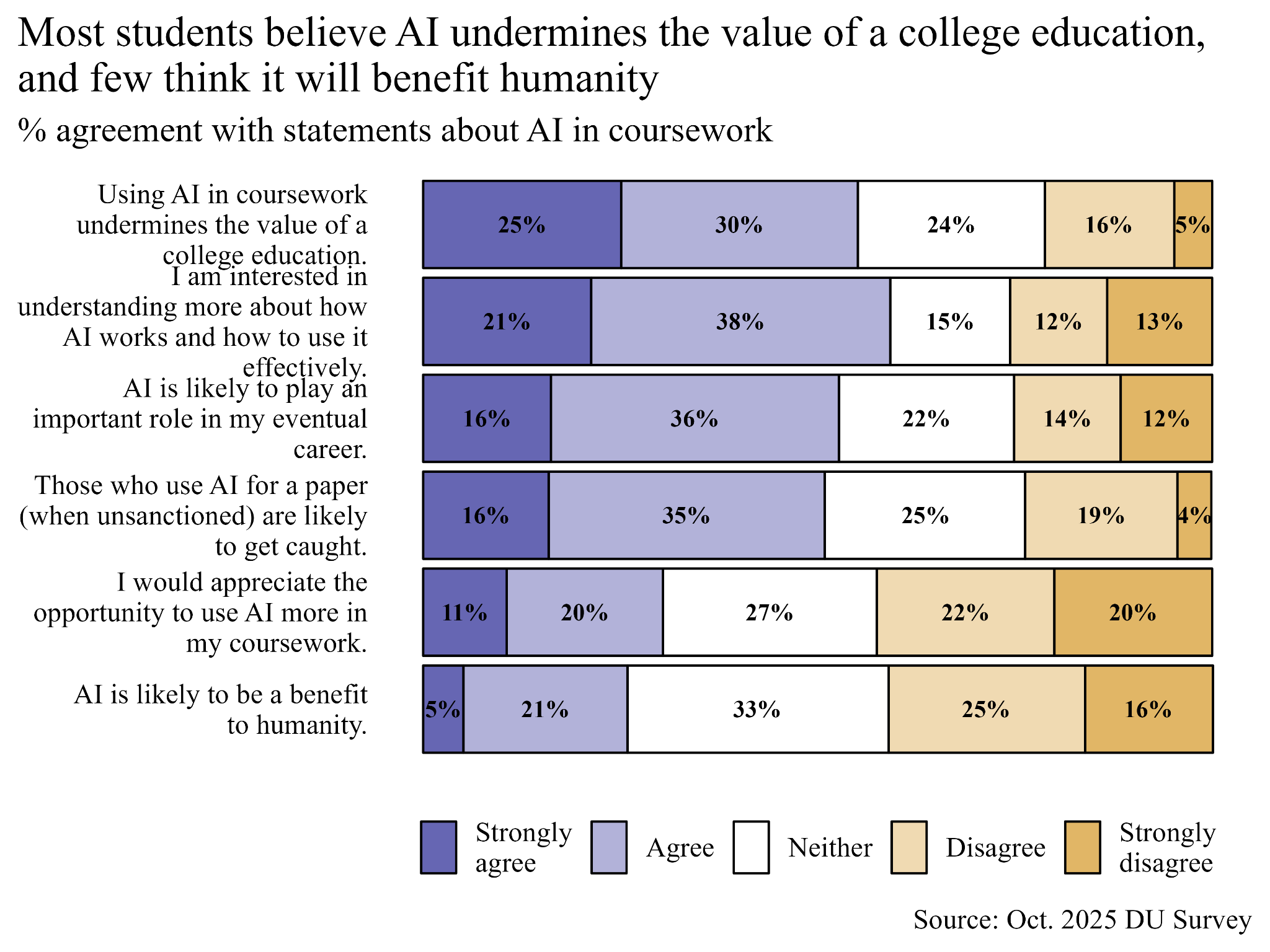

I always find it interesting when unexpected discrepancies show up in data, and when I moved from looking at stats on student AI use to AI attitudes I was not disappointed. Students were shown six different statements about AI and asked to indicate their level of agreement with each. I summarized the distribution of student responses in the figure below. Even though 80% of students say they use AI for help with coursework, 55% agree that using AI in coursework undermines the value of a college education. Also, only 26% agree that AI will be of benefit to humanity. At the same time, 59% have an interest in understanding how AI works so they can use it more effectively, and 52% think it will play an important role in their future career, but only 31% want more opportunities to use AI in their coursework. Contradictions across the board!

By the way, a slim majority of 51% agreed that professors can catch when students use AI to write papers. 25% felt uncertain, and 23% disagreed. So Denison professors, take advantage of the fact that only about a quarter of students think they can get away with writing an AI-generated paper.

The AI use paradox

The data tells a paradoxical story. A majority of students use AI for coursework, but a majority also think AI undermines their education. At the same time, many think they’ll need to use AI in their future career and want to better understand how it works and how to use it most effectively. But few want more opportunities to use AI as a part of their curriculum.

My take on these contradictions is that student AI use, at least at Denison, has much more to do with extrinsic pressure than internal desire. Students don’t necessarily want to use AI in their coursework—they understand that it harms their education—but they also don’t see an alternative. In some ways, this gives me hope. Most students would prefer NOT to use AI as a part of their coursework. I would prefer they didn’t either, so I’m glad to see this result.

But it’s going to be an uphill battle to make classrooms AI-free zones. In game-theoretic terms, students are stuck in a Pareto-inferior equilibrium where using AI is each student’s best response, one that costs them long-term benefits but yields short-term gains. They understand that a Pareto-improving alternative would be to limit their AI use and do their coursework themselves. But they know this isn’t just a matter of individual choice; it’s a collective action problem. The more other students use AI to keep on top of their assignments and gain an edge in academic performance, the more a single student may fear falling behind. So, just like in the classic Prisoners’ Dilemma, the individually best choice is also the one that is collectively suboptimal.
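For readers who like to see the logic spelled out, here is a toy two-student version of that dilemma. The payoff numbers are made up purely for illustration (they are not estimated from the survey); all that matters is their ordering, which follows the standard Prisoners’ Dilemma pattern.

```python
from itertools import product

STRATEGIES = ("no_ai", "use_ai")

# Hypothetical payoffs (student A, student B); higher is better.
# The ordering mimics a Prisoners' Dilemma: using AI while the other
# student abstains gives the biggest edge, and mutual abstention beats
# mutual AI use.
PAYOFFS = {
    ("no_ai", "no_ai"): (3, 3),
    ("no_ai", "use_ai"): (0, 4),
    ("use_ai", "no_ai"): (4, 0),
    ("use_ai", "use_ai"): (1, 1),
}


def best_response(player, other_choice):
    """Strategy that maximizes `player`'s payoff given the other's choice."""
    def payoff(own_choice):
        if player == 0:
            profile = (own_choice, other_choice)
        else:
            profile = (other_choice, own_choice)
        return PAYOFFS[profile][player]
    return max(STRATEGIES, key=payoff)


def nash_equilibria():
    """Profiles where each student is already playing a best response."""
    return [
        (a, b)
        for a, b in product(STRATEGIES, repeat=2)
        if best_response(0, b) == a and best_response(1, a) == b
    ]


def pareto_dominates(p, q):
    """True if profile p leaves no one worse off than q and someone better off."""
    pairs = list(zip(PAYOFFS[p], PAYOFFS[q]))
    return all(x >= y for x, y in pairs) and any(x > y for x, y in pairs)


print("Equilibria:", nash_equilibria())
print("Mutual abstention Pareto-dominates mutual AI use:",
      pareto_dominates(("no_ai", "no_ai"), ("use_ai", "use_ai")))
```

Under these assumed payoffs, the only equilibrium is for both students to use AI, even though both would be better off if neither did. That is the sense in which the outcome is Pareto inferior.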

I really only see two solutions to this problem. Either colleges do a better job of changing the structure of student learning environments by limiting access to AI, or students develop a longer time horizon that lets them see past the short-term benefits of AI use to the long-term benefits of deferred use.

I doubt either solution has legs.

Miles D. Williams (“DrDr”) is an avid gym rat and wannabe metal guitarist who teaches courses for Data for Political Research. He writes about data and international relations for his bi-weekly newsletter, Foreign Figures.